Problems tagged with "lecture-12"

Problem #129

Tags: backpropagation, quiz-06, neural networks, lecture-12

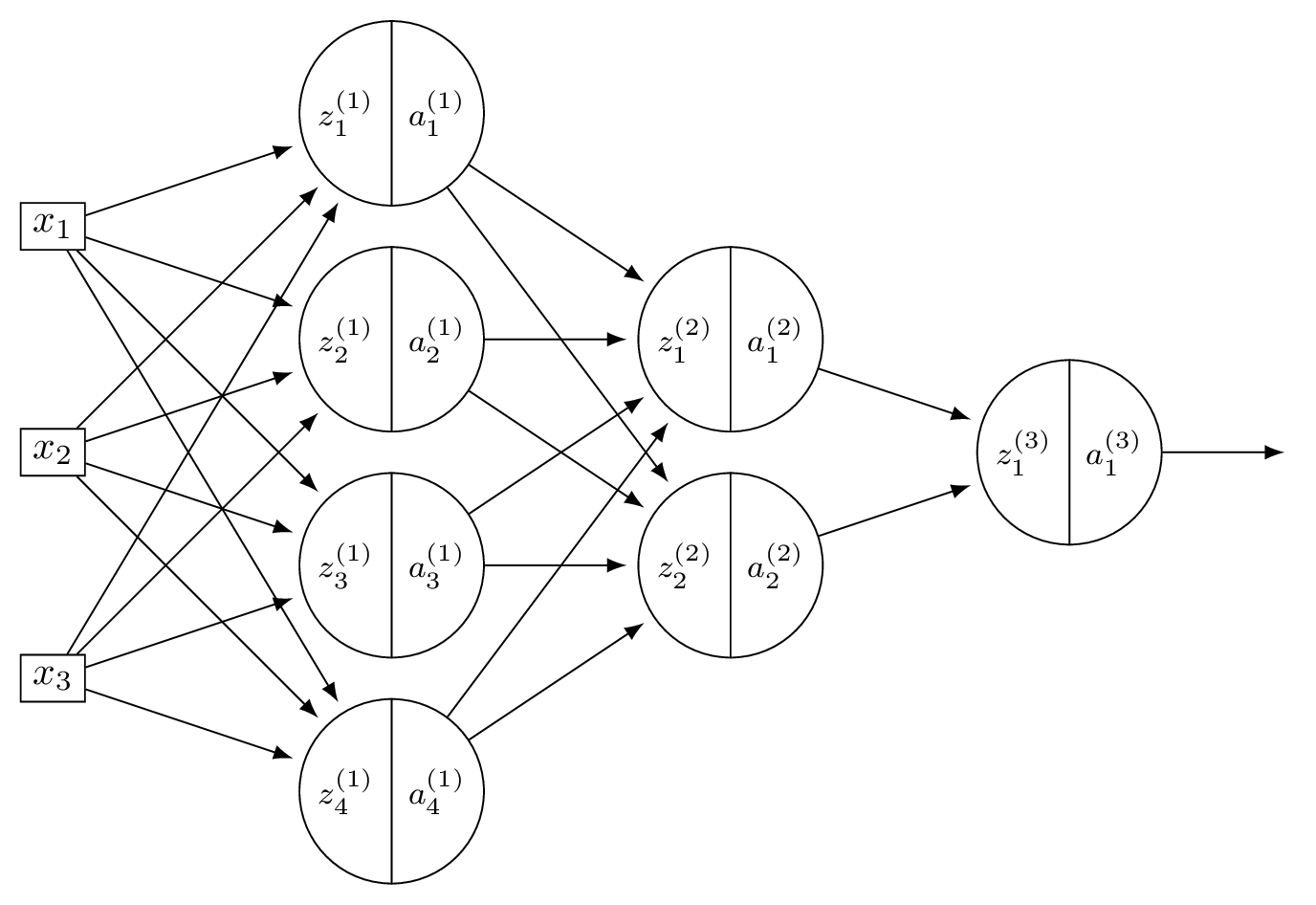

Suppose \(H\) is the neural network shown below:

You may assume that every hidden and output node has a bias; the biases are omitted from the drawing for simplicity.

The gradient of \(H\) with respect to the parameters is a vector. What is this vector's dimensionality?

Solution

The gradient has one entry per parameter, so its dimensionality equals the total number of weights and biases. Layer 1: \(3 \times 4\) weights \(+ \; 4\) biases \(= 16\). Layer 2: \(4 \times 2\) weights \(+ \; 2\) biases \(= 10\). Output: \(2 \times 1\) weights \(+ \; 1\) bias \(= 3\). Total: \(16 + 10 + 3 = 29\).
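
The count above can be checked with a few lines of Python. This is a minimal sketch, assuming a fully connected network whose layer widths are read off the solution as 3, 4, 2, 1 (input first); the helper name `count_parameters` is hypothetical and not part of the course materials.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the number of nodes in each layer, input layer first.
    Every non-input node is assumed to have exactly one bias.
    """
    total = 0
    # Pair each layer with the next one: (n_in, n_out).
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        weights = n_in * n_out  # one weight per edge between the two layers
        biases = n_out          # one bias per node in the receiving layer
        total += weights + biases
    return total


# Layer widths assumed from the solution: 3 inputs, hidden layers of 4 and 2, 1 output.
print(count_parameters([3, 4, 2, 1]))  # 29
```

Changing the list passed in is enough to check any other fully connected architecture of this kind.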

Problem #130

Tags: backpropagation, quiz-06, neural networks, lecture-12

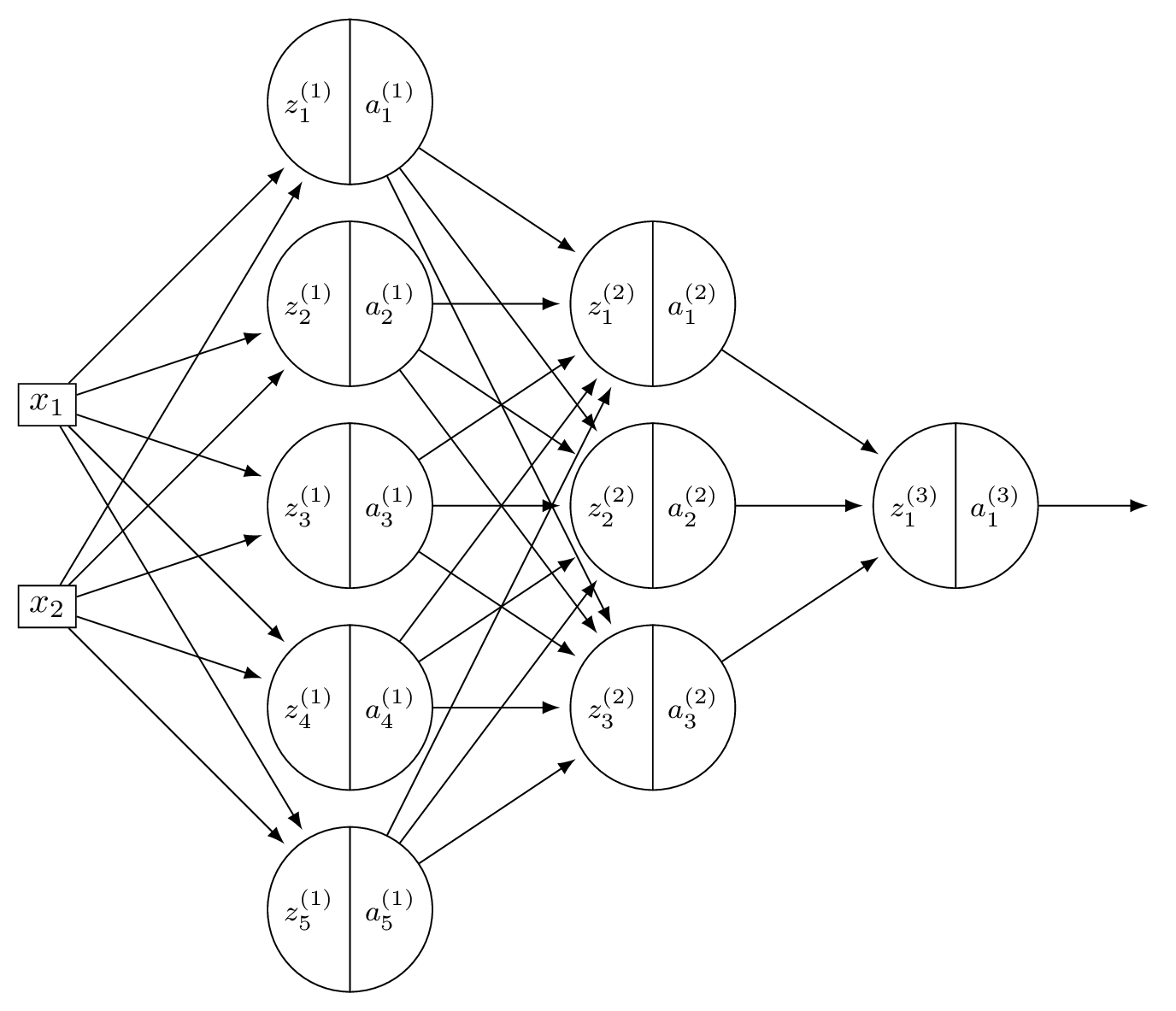

Suppose \(H\) is the neural network shown below:

You may assume that every hidden and output node has a bias; the biases are omitted from the drawing for simplicity.

The gradient of \(H\) with respect to the parameters is a vector. What is this vector's dimensionality?

Solution

The gradient has one entry per parameter, so its dimensionality equals the total number of weights and biases. Layer 1: \(2 \times 5\) weights \(+ \; 5\) biases \(= 15\). Layer 2: \(5 \times 3\) weights \(+ \; 3\) biases \(= 18\). Output: \(3 \times 1\) weights \(+ \; 1\) bias \(= 4\). Total: \(15 + 18 + 4 = 37\).
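
The same count can be written as one formula. As a sketch, assume a fully connected network with layer widths \(n_0, n_1, \ldots, n_L\), where \(n_0\) is the number of inputs (the symbols \(n_\ell\) and \(L\) are notation introduced here, not taken from the problem statement). Each node in layer \(\ell\) has \(n_{\ell-1}\) incoming weights and one bias, so

\[
\text{number of parameters} \;=\; \sum_{\ell=1}^{L} \left( n_{\ell-1}\, n_\ell + n_\ell \right) \;=\; \sum_{\ell=1}^{L} \left( n_{\ell-1} + 1 \right) n_\ell .
\]

With widths \((2, 5, 3, 1)\) this gives \(3 \cdot 5 + 6 \cdot 3 + 4 \cdot 1 = 15 + 18 + 4 = 37\), matching the layer-by-layer count.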